5 considerations for migrating enterprise manufacturing data to the cloud

As more facilities migrate data to the cloud (data that resides on servers in data centers in multiple locations), the options can become overwhelming, and misconceptions and confusion often increase. Today’s plant professionals, not just those in information technology (IT), have learned to consult many sources, from social media and trade publications to product manufacturers’ websites and online training courses, to discern what is truly useful.

Despite this learning curve, the fundamental questions are mostly the same as they have been for years. Here are five considerations to help make decisions about how cloud technology might drive improvements at your facility.

1. Define where your organization wants to be in five years.

Are your customers demanding more online portals to see order status and where their work is in the production cycle? Or do they simply require a good summary report viewable online after products or services have been delivered?

Do you need to recruit people with different skill sets who are comfortable with new technologies? Skills that were once the domain of pure IT enterprises are now in demand in manufacturing: programming languages like Python and JavaScript, operating systems such as Linux, and traditional x86 platforms. Data analytics tools are rapidly expanding; programs such as Power BI, Splunk, and R come to mind. Even the often-overlooked data analytics prowess of Microsoft Excel is a crucial skill. Regarding education, a college degree is valuable, but completing an intensive certification program can be just as useful in the right situation. Learning can be just as effective online as in the traditional classroom, especially in IT and application development.

Your current employees have untapped potential to learn new skills and update existing ones. The attitude of “If we train them, then they will just leave for the next slightly higher-paying position” is short-sighted. It is well known that compensation is not the only thing that impacts employee retention. Employee development and company growth go hand-in-hand; trained employees will be better prepared for future company growth.

All these things are important, but it is crucial to ensure your existing base infrastructure is ready for the next level. Has your team thoroughly reviewed your automation equipment, such as programmable logic controllers (PLCs), robotics, variable frequency drives (VFDs), servos, and human-machine interfaces (HMIs) to ensure those devices have updated firmware, application management, and overall connectivity from the plant floor to central offices?

2. Start with a realistic pilot project.

A manufacturing facility must still support existing customers while finding newer ways to do things and setting up a migration without total disruption. Find a situation where there is a known problem, identify the relevant data, and set up a small test project. For example, if machine downtime in one section of a plant has been linked to unstable power, power and downtime data from that section can be collected and compared.
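A pilot like the unstable-power example can begin as a very small script. The following is only a sketch under stated assumptions: the nominal voltage, the sag threshold, the five-minute window, and all sample readings are hypothetical values chosen for illustration, not data from a real plant. It flags voltage readings that fall below a threshold and checks which downtime events began shortly after one.

```python
from datetime import datetime, timedelta

# All three constants are assumptions for illustration only.
NOMINAL_VOLTS = 480.0           # assumed nominal line voltage
SAG_THRESHOLD = 0.90            # assumed: below 90% of nominal counts as a sag
WINDOW = timedelta(minutes=5)   # assumed: downtime within 5 minutes is "linked"


def find_sags(readings):
    """Return the timestamps of readings below the sag threshold."""
    limit = NOMINAL_VOLTS * SAG_THRESHOLD
    return [ts for ts, volts in readings if volts < limit]


def downtime_near_sags(downtime_starts, sag_times, window=WINDOW):
    """Return downtime events that began within `window` after any sag."""
    return [
        start for start in downtime_starts
        if any(timedelta(0) <= start - sag <= window for sag in sag_times)
    ]


# Hypothetical sample data: (timestamp, measured volts)
readings = [
    (datetime(2024, 1, 8, 9, 0), 481.2),
    (datetime(2024, 1, 8, 9, 15), 418.5),   # below 432 V, counted as a sag
    (datetime(2024, 1, 8, 9, 30), 479.8),
    (datetime(2024, 1, 8, 13, 0), 425.0),   # below 432 V, counted as a sag
]
# Hypothetical downtime start times for the same section of the plant
downtime_starts = [
    datetime(2024, 1, 8, 9, 17),
    datetime(2024, 1, 8, 11, 40),
    datetime(2024, 1, 8, 13, 2),
]

sags = find_sags(readings)
linked = downtime_near_sags(downtime_starts, sags)
print(f"{len(linked)} of {len(downtime_starts)} downtime events followed a voltage sag")
```

If most downtime events line up with sags, the pilot has confirmed the suspicion with data; if not, the team has learned that cheaply before investing in a larger migration.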

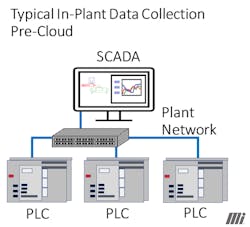

It is always important to do a “reality check” and manually trace the path of the data. Sometimes a high-powered supervisory control and data acquisition (SCADA) system connected to a SQL database already gives an entire organization visibility into essential data (see Figure 1). Add the fact that most large organizations have a sophisticated and secure remote VPN access system, and you can achieve the same data availability as a cloud system.

3. Be careful about shifting to the latest communication protocols and technologies if you don’t have the resources.

For example, the message queuing telemetry transport (MQTT) protocol has been around for years and is recognized globally as an excellent way to implement internet of things (IoT) solutions, but adopting it still takes time and expertise. Sometimes just connecting a Modbus/TCP controller to a dashboard is a good start.
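To give a sense of how modest that Modbus/TCP starting point can be, here is a minimal sketch that builds a "read holding registers" request and decodes the reply using only the Python standard library. The frame layout follows the published Modbus/TCP format; the unit ID, register address, and sample response bytes are hypothetical. A real deployment would send the request over a TCP socket to the controller (conventionally port 502) and would more likely rely on a maintained Modbus library than on hand-built frames.

```python
import struct

READ_HOLDING_REGISTERS = 0x03  # standard Modbus function code


def build_read_request(transaction_id, unit_id, start_address, quantity):
    """Build a Modbus/TCP request to read `quantity` holding registers."""
    # MBAP header: transaction id, protocol id (always 0),
    # count of bytes that follow (6), unit id.
    # PDU: function code, starting address, number of registers.
    return struct.pack(
        ">HHHBBHH",
        transaction_id, 0, 6, unit_id,
        READ_HOLDING_REGISTERS, start_address, quantity,
    )


def parse_read_response(frame):
    """Return the register values carried in a read-holding-registers reply."""
    _tid, _pid, _length, _unit, function = struct.unpack(">HHHBB", frame[:8])
    if function & 0x80:
        # The device sets the high bit of the function code to report an error;
        # the next byte is the exception code.
        raise ValueError(f"Modbus exception code {frame[8]}")
    byte_count = frame[8]
    # Each register is a 16-bit big-endian unsigned value.
    return list(struct.unpack(f">{byte_count // 2}H", frame[9:9 + byte_count]))


# Hypothetical request: transaction 1, unit 1, two registers starting at address 0
request = build_read_request(1, 1, 0, 2)
print(request.hex())

# Hypothetical reply carrying the register values 235 and 500
sample_response = bytes.fromhex("00010000000701030400eb01f4")
print(parse_read_response(sample_response))
```

The decoded register values could then be written to a database or pushed to whatever dashboard the facility already uses.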

4. Make sure your organization knows people in the industry you can trust.

There are a lot of new companies that may have excellent solutions ready to test. However, just as many startups at that level may have failed. You likely already have relationships with qualified partners such as system integrators, IT consultants, highly technical distributor partners, and vendors who can help you with these technologies.

5. A word about AWS, Microsoft Azure, and other deployments.

Many of you may remember that back in the 1990s, if you wanted to run CRM software, you had to install a series of disks (if you were lucky, a single CD) and you could only run this powerful tool on your desktop or laptop. ACT for Windows was an excellent example of CRM software. Fast-forward to present times, and most of us have used CRM software such as Salesforce without a second thought to the fact that we are using cloud-based software. Now, we use tools on Microsoft 365 completely online without downloading the respective applications, such as Word or Excel, onto our laptops.

Regarding cloud applications, there are two dominant entities: Amazon Web Services (AWS) and Microsoft Azure. It is important to stress that everyone in today’s world needs to be familiar with their capabilities, not just the traditional technical staff (IT, controls engineers, maintenance). That is because the tools are more accessible, and nearly everyone needs to retrieve and often customize how they get the data. Without comparing the two, there are commonalities important to know:

- The ability to connect to edge hardware. Most automation providers promote small industrial computers or HMIs that can gather data from sensors, PLCs, robots, etc. These devices can perform local processing of that data and immediately send it to another location. The destination can be on-site (often referred to as “on-premise”) or on a cloud platform such as AWS or Azure.

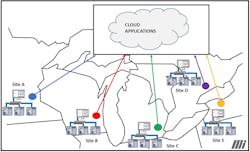

- The ability to create and/or host dashboards to show the status of several different geographical sites at a top-level view (see Figure 2). Also, many equipment manufacturers are starting to build vertically integrated specialized clouds.

- Having intelligent databases securely stored in the cloud. Azure and AWS have extensive tools for creating and maintaining databases, such as Azure SQL Database and Amazon DynamoDB.

- Platforms to help develop and test scalable applications completely in the cloud environment, such as Amazon EC2 and various DevOps tools from Azure.
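The edge-processing idea in the first point above can be sketched in a few lines. This is an illustration only: the site name, device name, and temperature samples are hypothetical, and the step that actually transmits the payload to an on-site server or a cloud platform is deliberately left out because it depends on the platform chosen. The sketch reduces a batch of raw samples to a compact summary and serializes it as JSON for forwarding.

```python
import json
from statistics import mean


def summarize(site, device, samples):
    """Reduce raw samples to a compact summary suitable for forwarding."""
    return {
        "site": site,
        "device": device,
        "count": len(samples),
        "min": min(samples),
        "max": max(samples),
        "mean": round(mean(samples), 2),
    }


# Hypothetical temperature samples collected locally by an edge device
samples = [71.8, 72.4, 73.1, 72.9, 90.2]

summary = summarize("plant-a", "press-07", samples)

# JSON is a common interchange format; the destination (on-site or cloud)
# would receive this string instead of every raw sample.
payload = json.dumps(summary, sort_keys=True)
print(payload)
```

Summarizing at the edge keeps the volume of data leaving the plant small, and a dashboard covering several sites can be built from these summaries rather than from raw sensor streams.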

Where to start?

This is a great time to find like-minded manufacturing professionals with the same long-term goals. Migrating to the cloud is a gradual process, but worth it as the ways we can collect and interpret data grow. The more an organization learns and implements, the more it can attract and grow its most important resource: employees and their knowledge.

About the Author

John Kan

Connectivity Products Manager, Motion Ai

John Kan is connectivity products manager with Motion Ai. He has 28 years of experience in factory automation, focusing on industrial Ethernet. Kan’s goal is to aid customers with connecting from the factory floor to destinations both locally and in the cloud. He is committed to sharing knowledge and enthusiasm with the next generation of automation professionals. Kan also brings a unique perspective with four years of experience in Europe working in HMI and SCADA. He earned a BSME from Kettering University (formerly General Motors Institute).