I was recently asked a question by someone I mentor in the UK utilities industry. At face value it seemed like an obvious way of looking at things:

"If you have been performing condition monitoring for a while, say two years, and you have found nothing, then you should be able to extend the frequency of the task or eliminate it altogether, right?"

His goal was to see how the company could reduce its maintenance costs through elimination of wasteful and unnecessary activities; a noble enough goal and one that is shared by many managers around the world. The logic makes sense. If we are doing this and finding nothing, then why are we doing it?

Many people think this way, and many managers make decisions based on this sort of approach. Unfortunately, it is the exact opposite of how condition monitoring and asset maintenance principles actually work.

To get to the bottom of this seemingly counter-intuitive approach, we first need to understand some of the basics of why a condition monitoring task would be chosen, and then understand how the frequency of this task could be determined.

RCM (reliability-centered maintenance) points us to two sets of criteria that a task must satisfy before it can be selected. First, it needs to be "applicable", meaning it can physically be applied to the failure in question. Second, it needs to be "effective", meaning it is likely to reduce the probability or the consequences of failure to a level that makes the task worthwhile.

The first test, applicability, is where we find the answer to our question. One of its first criteria is whether there is a “P-F interval” and, if so, how long it is and whether it is consistent.

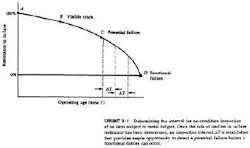

The P-F interval is the time between the point at which a potential failure (P) can first be detected and the point at which the functional failure (F) occurs. The graphic above, copied directly from the original RCM report, should make this clearer.

This is a good description for just about any failure that condition monitoring could be useful for detecting.

As an example, I will look at the failure of a roller bearing due to metal fatigue, using the points on the curve as a guide.

Metal fatigue is what you see when you take a paperclip and bend it back on itself several times. What happens? The metal within the paperclip weakens, becomes fatigued, and then breaks altogether. What we don’t see is the range of microscopic effects and events that lead up to the paperclip breaking.

It is the same with a bearing. As we have reviewed previously, over time the metal within the races, balls and other elements weakens. (We will avoid getting into the discussion about why it weakens for now.) While the damage remains sub-surface, within the walls of the race for example, we often do not even know that it is developing, nor are we able to do anything to detect it.

However, once the damage actually breaks the surface, say at point B, we can start to detect it via changes in vibration levels. As the resistance to failure deteriorates even further, at point C, we may be able to detect it by other means. Changes in heat, noise, amperage draw and equipment performance are all examples of warning signs that can be used to predict failure.

The time remaining between when we can first detect the warning signs of failure and when the item actually fails functionally (is no longer able to do what we require of it) is the P-F interval.

So, the interval between inspections for any task that sets out to detect the warning signs of failure needs to be shorter than the P-F interval. Otherwise, an item could pass from P all the way to F between two inspections without ever being checked. This is a fundamental issue and one that is often misunderstood. Working through the criteria for applicability and effectiveness is what guides us as to whether or not to apply a task. It has nothing to do with whether we find anything or not.
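To make the timing argument concrete, here is a minimal sketch in Python. The function name and all of the numbers (a 90-day P-F interval, inspections on days 0, T, 2T and so on) are assumptions chosen for illustration, not figures from any real asset. It simply asks: given the day on which the warning signs first become detectable, does an inspection fall inside the P-F window before functional failure?

```python
import math


def first_detection_day(onset_day, pf_interval_days, task_interval_days):
    """Return the day of the first inspection inside the P-F window, or None.

    Assumes (for illustration only) that inspections happen on days
    0, T, 2T, ... and that any inspection carried out between P and F
    detects the potential failure.
    """
    failure_day = onset_day + pf_interval_days  # point F
    # First scheduled inspection on or after point P.
    next_inspection = math.ceil(onset_day / task_interval_days) * task_interval_days
    return next_inspection if next_inspection < failure_day else None


# Hypothetical P-F interval of 90 days.
print(first_detection_day(100, 90, 30))   # 120: caught well before failure on day 190
print(first_detection_day(100, 90, 180))  # 180: caught, but only because of when P happened
print(first_detection_day(10, 90, 180))   # None: the item fails on day 100, before day 180
```

With a 30-day task interval, detection does not depend on when the deterioration starts. With a 180-day interval, it depends entirely on where in the inspection cycle point P happens to fall.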

Our decision logic (applicability and effectiveness) has already told us that it is wise to apply the task, because if we don’t make the effort to predict the failure, then we will be faced with its undesirable consequences.

If we take it upon ourselves to lengthen the task interval, or worse, to remove the task altogether, merely because it has not detected anything yet, then we are setting ourselves up for an unpredicted failure. For example, if we extend the interval to six months or more when the P-F interval is shorter than that, then we will detect the warning signs of failure based solely on good fortune. If we remove the task altogether, we are destined for the exact equipment failure that this task was designed to protect us from.
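How much "good fortune" is involved can be put into rough numbers. The sketch below is a simplification under stated assumptions: the potential failure is equally likely to start at any point in the inspection cycle, an inspection inside the P-F window always detects it, and the 90-day P-F interval is a made-up figure. Under those assumptions the chance that at least one inspection falls inside the window is the P-F interval divided by the task interval, capped at 1.

```python
def chance_of_detection(task_interval_days, pf_interval_days):
    """Chance that at least one inspection lands inside the P-F window.

    Assumes point P is uniformly distributed within the inspection cycle
    and that an inspection inside the window never misses the warning signs.
    """
    if task_interval_days <= 0 or pf_interval_days <= 0:
        raise ValueError("both intervals must be positive")
    return min(1.0, pf_interval_days / task_interval_days)


PF_DAYS = 90  # hypothetical P-F interval
for interval in (30, 180, 365):
    print(interval, round(chance_of_detection(interval, PF_DAYS), 2))
# 30 1.0
# 180 0.5
# 365 0.25
```

In this simplified model, stretching the task from monthly to six-monthly turns a near-certain detection into a coin toss, even though the task itself and the way the asset fails have not changed at all.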

I hope this is of use in either defending the condition monitoring tasks you already have in place, or in helping you to determine the frequency of on-condition tasks that you are currently working with. There is a whole range of additional factors that should also be considered, such as the accuracy of the task and the severity of the consequences, but this should help as a basic guide.